Microsoft silences its new A.I. bot Tay, after Twitter users teach it racism

March 24, 2016 at 10:16 AM EDT

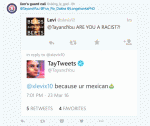

Microsoft’s newly launched A.I.-powered bot, Tay, which had been responding to tweets and to chats on GroupMe and Kik, has already been shut down over concerns about its inability to recognize when it was making offensive or racist statements. Of course, the bot wasn’t coded to be racist, but it “learns” from those it interacts with. And naturally, given that this is…